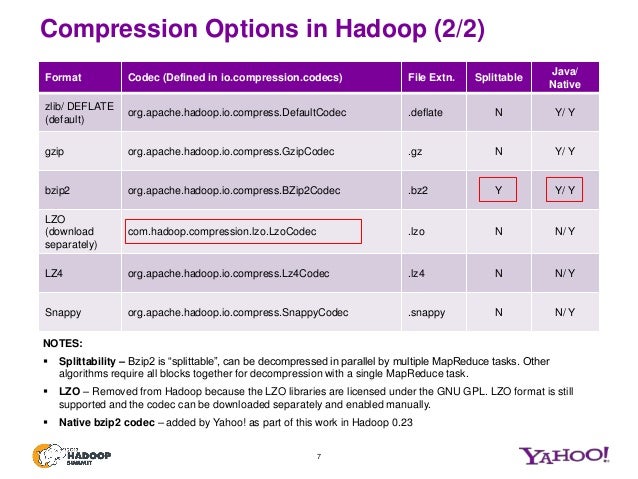

Using a sample of 35 random symbols containing only integers, here are the aggregate data sizes under various storage formats and compression codecs on Windows. The results are sorted by increasing compression ratio, using plain CSVs as the baseline. Compressed CSVs achieved 78% compression. Compressed CSVs and Parquet took a similar amount of time to compress, but Parquet files are more easily ingested by Hadoop HDFS.

Snappy is a very fast compression (250 MB/sec) and decompression (500 MB/sec) library written in C++. It does not aim for maximum compression or for compatibility with any other compression library; instead, it aims for very high speeds and reasonable compression. For instance, compared to the fastest mode of zlib, Snappy is an order of magnitude faster for most inputs, but the resulting compressed files are anywhere from 20% to 100% bigger. For .NET, there is an unofficial signed Snappy.NET library. Codecs such as gzip, 7zip, and Snappy are designed for text.

For Parquet, the compression codec is chosen when writing the files. Supported types are 'none', 'gzip', 'snappy' (default), and 'lzo'. When reading from Parquet files, Data Factory automatically determines the compression codec based on the file metadata. Note that the Copy activity currently doesn't support LZO when reading or writing Parquet files.

A quick overview of compression in Kafka: multiple messages are bundled and compressed together. The compressed messages are then turned into a special kind of message and appended to Kafka’s log file.
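The Kafka batching idea described above can be illustrated with a minimal sketch. This is not Kafka's actual wire format: the length-prefixed framing, the `pack_batch` and `unpack_batch` helpers, the in-memory `log` list, and the sample messages are all assumptions made for illustration, and zlib stands in for whichever codec the producer is configured with.

```python
import struct
import zlib


def pack_batch(messages):
    """Length-prefix each message, concatenate them, and compress the bundle."""
    framed = b"".join(struct.pack(">I", len(m)) + m for m in messages)
    return zlib.compress(framed)


def unpack_batch(wrapper):
    """Decompress a wrapper payload and split it back into the original messages."""
    framed = zlib.decompress(wrapper)
    messages, offset = [], 0
    while offset < len(framed):
        (length,) = struct.unpack_from(">I", framed, offset)
        offset += 4
        messages.append(framed[offset:offset + length])
        offset += length
    return messages


# Hypothetical messages: many small, similar records, as in a tick feed.
messages = [
    f"symbol=ABC,price={10000 + i},size={100 + i % 7}".encode()
    for i in range(200)
]

log = []  # stand-in for the append-only log file
log.append(pack_batch(messages))  # the whole bundle is stored as one entry

individually = sum(len(zlib.compress(m)) for m in messages)
batched = len(log[0])
print(f"compressed one by one: {individually} bytes, as one batch: {batched} bytes")
```

Compressing the bundle lets the codec exploit repetition across messages, which is why batching tends to pay off for small, similar records.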

Parquet v2 with internal GZip achieved an impressive 83% compression on my real data, an extra 10 GB in savings over compressed CSVs. ... entire time series to achieve high compression ratio, but ignore local contexts around individual ...
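To make the percentages concrete, here is a rough sketch of how such a comparison could be run, assuming "compression" means space saved relative to the plain CSV baseline. The data is synthetic, the column names are hypothetical, and because Snappy, LZ4, and Parquet are not in the Python standard library, zlib, gzip, bz2, and lzma are used as stand-in codecs. The numbers it prints are not the results reported in this post.

```python
import bz2
import csv
import gzip
import io
import lzma
import random
import zlib

# Build a synthetic integer-only CSV (hypothetical columns) as the baseline.
random.seed(0)
buf = io.StringIO()
writer = csv.writer(buf)
writer.writerow(["timestamp", "price", "size"])
price = 100_000
for i in range(20_000):
    price += random.randint(-5, 5)
    writer.writerow([1_600_000_000 + i, price, random.randint(1, 500)])
plain = buf.getvalue().encode("ascii")

# Stand-in codecs from the standard library.
codecs = {
    "zlib level 1 (fast)": lambda b: zlib.compress(b, 1),
    "gzip level 9": lambda b: gzip.compress(b, compresslevel=9),
    "bz2": bz2.compress,
    "lzma": lzma.compress,
}


def savings(compressed_size, baseline_size):
    """Percentage of space saved relative to the uncompressed baseline."""
    return 100.0 * (1 - compressed_size / baseline_size)


# Sort by increasing savings, with plain CSV as the baseline.
results = sorted(
    (savings(len(fn(plain)), len(plain)), name) for name, fn in codecs.items()
)
for pct, name in results:
    print(f"{name:22s} {pct:5.1f}% smaller than plain CSV")
```

Under this definition, a file that shrinks from 100 MB to 22 MB would count as 78% compression.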

LZ4 is a lossless compression algorithm that sacrifices compression ratio for compression and decompression speed. Its compression speed is 400 MB/s per core.

Goal: Efficiently transport integer-based financial time-series data to dedicated machines and research partners by experimenting with the smallest data transport format(s) among Avro, Parquet, and compressed CSVs.

My goal this weekend is to experiment with and implement a compact and efficient data transport format. My financial time-series data is currently collected and stored in hundreds of gigabytes of SQLite files on non-clustered, RAIDed Linux machines. I have an experimental cluster computer running Spark, but I also have access to AWS ML tools, as well as partners with their own ML tools and environments (TensorFlow, Keras, etc.).
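As a starting point for the compressed-CSV option, the sketch below exports rows from SQLite into a gzip-compressed CSV payload and reads them back to confirm that the round trip is lossless. The `ticks` table, its columns, and the helper names `export_gzip_csv` and `import_gzip_csv` are hypothetical, and an in-memory database stands in for the on-disk SQLite files.

```python
import csv
import gzip
import io
import sqlite3

# Hypothetical schema; an in-memory database stands in for a real SQLite file.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE ticks (ts INTEGER, price INTEGER, size INTEGER)")
rows = [
    (1_600_000_000 + i, 100_000 + (i * 7) % 50, 1 + i % 300)
    for i in range(5_000)
]
conn.executemany("INSERT INTO ticks VALUES (?, ?, ?)", rows)


def export_gzip_csv(connection, query):
    """Run a query and return its result as gzip-compressed CSV bytes."""
    raw = io.BytesIO()
    with gzip.open(raw, "wt", encoding="utf-8", newline="") as fh:
        writer = csv.writer(fh)
        cursor = connection.execute(query)
        writer.writerow(col[0] for col in cursor.description)  # header row
        writer.writerows(cursor)
    return raw.getvalue()


def import_gzip_csv(payload):
    """Decode gzip-compressed CSV bytes back into a header and integer rows."""
    with gzip.open(io.BytesIO(payload), "rt", encoding="utf-8", newline="") as fh:
        reader = csv.reader(fh)
        header = next(reader)
        return header, [tuple(int(v) for v in row) for row in reader]


payload = export_gzip_csv(conn, "SELECT ts, price, size FROM ticks ORDER BY ts")
header, received = import_gzip_csv(payload)
print(header, len(received), "rows,", len(payload), "bytes")
```

Because every value is an integer, parsing with `int` on the receiving side recovers the original rows exactly; a format such as Avro or Parquet would carry the schema with the data instead.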
